Apache Hadoop Review

OUR SCORE 98%


- What is Apache Hadoop

- Product Quality Score

- Main Features

- List of Benefits

- Technical Specifications

- Available Integrations

- Customer Support

- Pricing Plans

- Other Popular Software Reviews

What is Apache Hadoop?

Apache Hadoop is an open-source framework and software library for collecting, storing, and analyzing very large data sets. It is a highly scalable and reliable computing technology that processes large data sets in a distributed way across clusters of computers, scaling from single servers to thousands of machines. Its architecture rests on two core components: a distributed file system, HDFS (Hadoop Distributed File System), and a processing component and programming paradigm called Map/Reduce. HDFS stores data files by splitting them into large blocks and distributing those blocks across the nodes of a cluster of computers or servers.

Product Quality Score

Apache Hadoop Features

Main features of Apache Hadoop are:

- Scale Up From Single Servers

- Distributed File Systems

- Distributes Blocks of Files

- Divides Large Data Files

- Processing of Large Data Sets

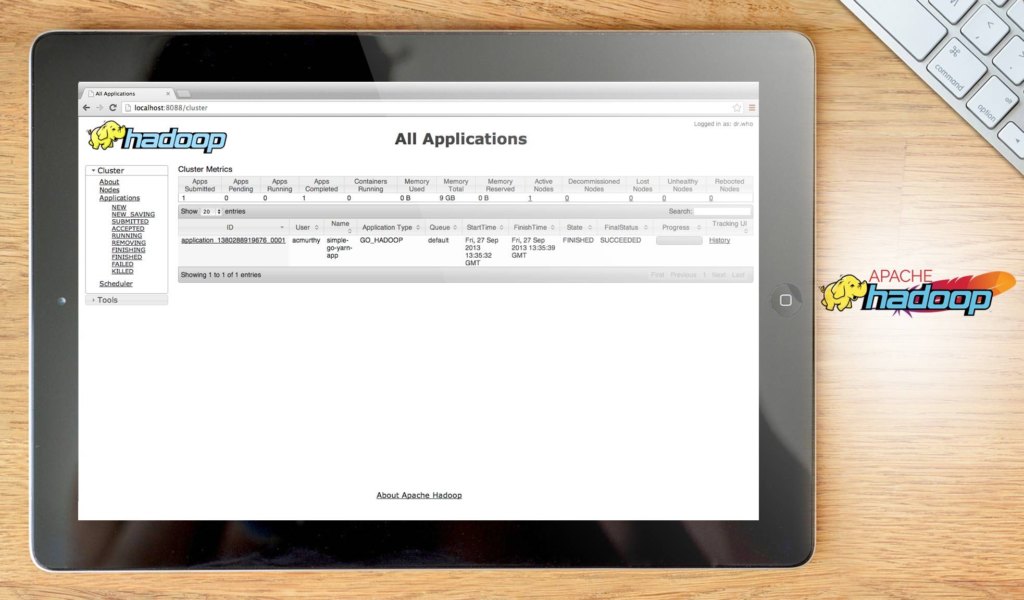

- Cluster Management

- Job Scheduling

- Computation Operations/Tasks

- Master/Slave Architecture

- Fault-Tolerance Capability

- Parallel Computing Framework

Apache Hadoop Benefits

The main benefits of Apache Hadoop are its big data technology for handling data explosions, a highly scalable framework that delivers high availability, the reliable HDFS file system, and a distributed computing component built on Apache YARN. Here are more details:

Big Data Technology for Managing Explosions in Data

As a big data technology, Apache Hadoop provides a framework and an ecosystem built to process huge amounts of data. Organizations that grow and evolve will eventually face data explosions. When that happens, they need to manage and process huge data sets and meet the challenges that come with an increasingly information-driven world.

High Scalability of the Framework that Ensures High Availability

Big data technology has to scale, and Apache Hadoop is highly scalable. It is designed to scale out: as data sets grow, more machines and servers can be added to the cluster, and the framework spreads storage, processing, and analysis across them. A key advantage is that Hadoop does not rely on specialized hardware for high availability; instead, the software itself detects and handles failures. It distributes large data sets across the machines of a cluster and runs the intensive parallel computation on the nodes that hold the relevant data.
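The scale-out pattern can be sketched on a single machine. The following is a toy Python illustration under stated assumptions, not Hadoop code: threads stand in for cluster nodes, and `process_partition` is a hypothetical workload (a sum of squares). It shows that the combined answer stays the same while the work is spread over more workers.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(data, num_parts):
    """Split data into num_parts contiguous chunks of near-equal size."""
    size, extra = divmod(len(data), num_parts)
    parts, start = [], 0
    for i in range(num_parts):
        # The first `extra` chunks get one additional element.
        end = start + size + (1 if i < extra else 0)
        parts.append(data[start:end])
        start = end
    return parts


def process_partition(chunk):
    """Hypothetical per-node work: the sum of squares of one chunk."""
    return sum(x * x for x in chunk)


def scale_out(data, num_workers):
    """Spread partitions over num_workers threads, then combine partial results."""
    parts = partition(data, num_workers)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        partials = list(pool.map(process_partition, parts))
    return sum(partials)


if __name__ == "__main__":
    data = list(range(1_000))
    # More workers (the analogue of more machines), same result.
    for workers in (1, 4, 16):
        print(workers, scale_out(data, workers))
```

In a real cluster the partitions would already sit on different nodes as HDFS blocks, so the framework ships the computation to the data rather than gathering the data in one place.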

Dependable Distributed File System

Apache Hadoop provides a distributed file system called the Hadoop Distributed File System, or simply HDFS. HDFS divides large data files into a sequence of blocks, then stores and distributes those blocks across large clusters of machines or servers. HDFS is also highly reliable because it is fault tolerant: each block is replicated on several nodes, so the system keeps operating even when individual components fail.
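To make the block-and-replica idea concrete, here is a minimal Python sketch. It is a toy model, not the HDFS implementation: the node names are hypothetical, replica placement is a simple rotation (real HDFS uses a rack-aware placement policy), and block size and replication factor are plain parameters (recent HDFS releases default to 128 MB blocks and 3 replicas).

```python
def split_into_blocks(data: bytes, block_size: int) -> list:
    """Cut a file's bytes into fixed-size blocks; the last block may be shorter."""
    return [data[i:i + block_size] for i in range(0, len(data), block_size)]


def place_replicas(num_blocks: int, nodes: list, replication: int) -> dict:
    """Assign each block to `replication` distinct nodes using a rotating offset (toy policy)."""
    if replication > len(nodes):
        raise ValueError("replication factor cannot exceed the number of nodes")
    return {
        b: [nodes[(b + r) % len(nodes)] for r in range(replication)]
        for b in range(num_blocks)
    }


def readable_after_failure(placement: dict, failed: set) -> bool:
    """True if every block still has at least one replica on a live node."""
    return all(
        any(node not in failed for node in replicas)
        for replicas in placement.values()
    )


if __name__ == "__main__":
    nodes = ["node1", "node2", "node3", "node4"]  # hypothetical DataNodes
    blocks = split_into_blocks(b"x" * 1000, block_size=256)
    placement = place_replicas(len(blocks), nodes, replication=3)
    print(len(blocks))  # 4 blocks: 256 + 256 + 256 + 232 bytes
    print(readable_after_failure(placement, failed={"node2"}))  # True
```

With three replicas per block, losing any single node leaves every block readable, which is the fault-tolerance property described above.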

Computing Component Based on Apache YARN

Besides HDFS, Apache Hadoop contains a second major component named Map/Reduce, which uses Apache YARN to manage distributed parallel computation across Hadoop clusters. YARN is a job scheduling and cluster resource management framework developed by the Apache Software Foundation as part of Hadoop.
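The Map/Reduce programming model itself can be shown without a cluster. Below is a minimal single-process Python sketch of the classic word-count example: a map phase emits (word, 1) pairs, a shuffle groups the pairs by key, and a reduce phase sums each group. Real Hadoop jobs are typically written against the Java `Mapper` and `Reducer` classes and run as tasks scheduled by YARN; the function names here are illustrative only.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Emit (word, 1) for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group intermediate values by key, as the framework does between phases."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Combine all values for one key into a single result."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    """Run map, shuffle, and reduce in sequence over the input lines."""
    intermediate = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle(intermediate)
    return dict(reduce_phase(k, v) for k, v in sorted(grouped.items()))


if __name__ == "__main__":
    docs = ["the quick brown fox", "the lazy dog", "The fox"]
    print(word_count(docs))
    # {'brown': 1, 'dog': 1, 'fox': 2, 'lazy': 1, 'quick': 1, 'the': 3}
```

On a cluster, many map tasks would each process one input split (often one HDFS block) on the node that stores it, and the shuffle would move intermediate pairs over the network to the reduce tasks.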

Technical Specifications

Devices Supported

- Web-based

- iOS

- Android

- Desktop

Customer types

- Small business

- Medium business

- Enterprise

Support Types

- Phone

- Online

Apache Hadoop Integrations

The following Apache Hadoop integrations are currently offered by the vendor:

- Spark

- HBase

- ZooKeeper

- OpenStack Swift

- Hive

- Ambari

- Chukwa

- Avro

- Cassandra

- YARN

- Tez

- Azure Blob Storage

- Amazon S3

- Pig

- Mahout


Customer Support

Pricing Plans

Apache Hadoop pricing is available in the following plans: