Hadoop HDFS Review

OUR SCORE 80%

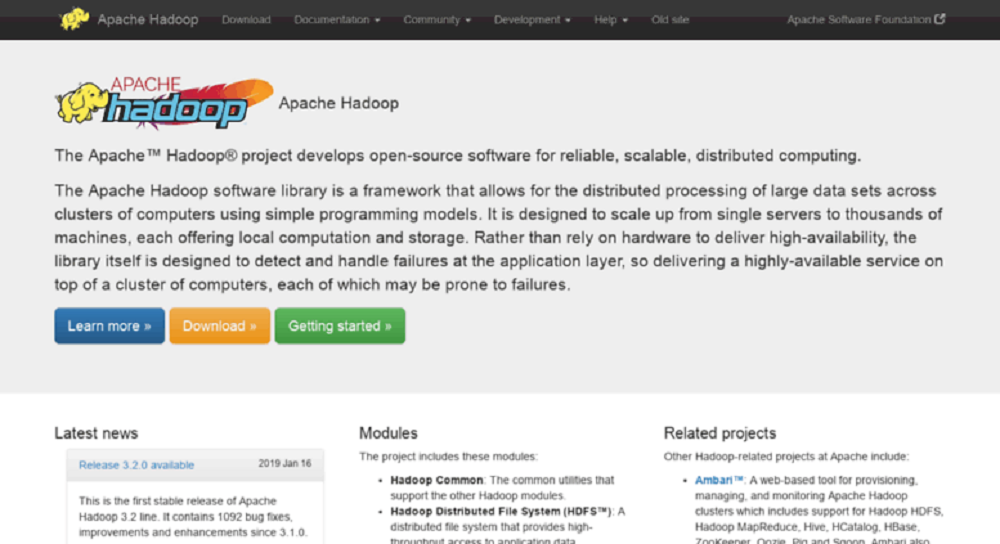

What is Hadoop HDFS?

Hadoop HDFS is built on a distributed file system design and runs on commodity hardware. The Hadoop Distributed File System, or HDFS, serves as Hadoop's main storage system and is capable of handling enormous amounts of data. To store pools of big data, the system spreads files across many different machines. The files are stored redundantly, so that data is not lost when an individual machine fails. Moreover, HDFS supports tools for essential big data analytics and offers high-performance access to data across Hadoop clusters. The software is designed to run on low-cost hardware and is highly fault-tolerant.

Hadoop HDFS Features

Main features of Hadoop HDFS are:

- Browser Interface

- Cluster Rebalancing

- Space Reclamation

- FS Shell

- File System Namespace

- Staging

- Data Replication

- Data Blocks

- DFSAdmin

- Data Disk Failure

- Data Integrity

- Replication Pipelining

Hadoop HDFS Benefits

The main benefits of Hadoop HDFS are its support for applications with big data, its cost-effectiveness as open-source software, little to no risk of data loss, and parallel processing. Here are more details:

Supports Big Data

HDFS is designed to support applications with large data sets, including individual files that reach terabytes in size. The platform uses a master/slave architecture. Each cluster contains a single NameNode that manages the file system namespace and handles file system operations. The cluster also contains DataNodes, which manage the storage attached to the individual nodes they run on.
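The division of labor between the NameNode and the DataNodes can be illustrated with a small toy model. This is a minimal sketch and not the Hadoop API: the class names, the `write_file` and `read_file` helpers, the tiny 4-byte block size, and the round-robin placement are all assumptions made for illustration, and replication is left out to keep the example short.

```python
"""Toy model of the NameNode/DataNode split (illustrative, not the Hadoop API)."""


class DataNode:
    """Stores raw block contents for one machine."""

    def __init__(self, name):
        self.name = name
        self.blocks = {}  # block_id -> bytes

    def store(self, block_id, data):
        self.blocks[block_id] = data

    def read(self, block_id):
        return self.blocks[block_id]


class NameNode:
    """Holds only metadata: which blocks form a file and where each one lives."""

    def __init__(self):
        self.namespace = {}  # path -> ordered list of block ids
        self.locations = {}  # block_id -> DataNode name
        self._next_id = 0

    def allocate_block(self, path, datanode_name):
        block_id = self._next_id
        self._next_id += 1
        self.namespace.setdefault(path, []).append(block_id)
        self.locations[block_id] = datanode_name
        return block_id


def write_file(namenode, datanodes, path, data, block_size=4):
    """Split data into blocks and spread them round-robin over the DataNodes."""
    namenode.namespace.setdefault(path, [])  # register empty files too
    names = sorted(datanodes)
    for offset in range(0, len(data), block_size):
        target = names[(offset // block_size) % len(names)]
        block_id = namenode.allocate_block(path, target)
        datanodes[target].store(block_id, data[offset:offset + block_size])


def read_file(namenode, datanodes, path):
    """Look up block locations on the NameNode, then fetch from the DataNodes."""
    return b"".join(
        datanodes[namenode.locations[block_id]].read(block_id)
        for block_id in namenode.namespace[path]
    )
```

In this sketch the NameNode never touches file contents. It only records which blocks make up a path and which DataNode holds each block, while the bytes themselves stay on the DataNodes.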

Open-source Software

Any organization that needs this type of system can implement HDFS without having to deal with licensing or support expenses. Users can therefore store and process orders of magnitude more data per dollar than with a conventional NAS or SAN system, which translates into greater monetary benefits.

No Data Loss

HDFS is implemented on affordable commodity hardware, which means server failures can happen every now and then. Users do not have to worry, however, because the software is designed so that the risk and consequences of a catastrophic failure are minimized. Data is replicated and transferred between nodes rapidly, so the system can continue to function even when several nodes fail simultaneously.
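The effect of replication on node failures can be sketched with a small check. This is an illustrative example under stated assumptions, not HDFS code: the function name `classify_blocks`, the report keys, and the sample block map are hypothetical, and the target of three replicas is assumed here as the commonly used default.

```python
def classify_blocks(block_locations, failed_nodes, target_replication=3):
    """Group blocks by how many replicas survive a set of node failures.

    block_locations maps a block id to the list of nodes holding a replica.
    """
    failed = set(failed_nodes)
    report = {"healthy": [], "under_replicated": [], "lost": []}
    for block_id, nodes in sorted(block_locations.items()):
        live = [node for node in nodes if node not in failed]
        if not live:
            report["lost"].append(block_id)  # no surviving replica
        elif len(live) < target_replication:
            report["under_replicated"].append(block_id)  # readable, needs re-copy
        else:
            report["healthy"].append(block_id)
    return report


# Hypothetical block map: three replicas per block across five nodes.
locations = {
    "blk_1": ["n1", "n2", "n3"],
    "blk_2": ["n2", "n3", "n4"],
    "blk_3": ["n3", "n4", "n5"],
}
report = classify_blocks(locations, failed_nodes=["n1", "n2"])
```

With two of the five nodes down, every block in this sample still has at least one live replica, so all data stays readable. A block is only lost when every node holding one of its replicas fails at the same time.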

Parallel Processing

In addition, the software supports parallel processing, and its data layout helps keep processing from being interrupted by a failure. HDFS breaks incoming data down into separate blocks and distributes them to various nodes in a cluster. Moreover, the file system replicates each block multiple times. The copies are delivered to individual nodes, and at least one copy is placed on a different server rack. This allows processing to continue while the failure is being fixed.
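The splitting and rack-aware placement described above can be sketched as follows. This is a simplified illustration, not the placement policy HDFS actually ships: the function names, the rack and node names, and the rotation scheme are assumptions, and the 128 MiB block size and replication factor of 3 are assumed here as the usual defaults. The sketch only guarantees the property named in the text, that each block's replicas span at least two racks.

```python
BLOCK_SIZE = 128 * 1024 * 1024  # assumed default block size (128 MiB)
REPLICATION = 3                 # assumed default replication factor


def split_into_blocks(file_size, block_size=BLOCK_SIZE):
    """Return the length in bytes of each block for a file of file_size bytes."""
    if file_size < 0:
        raise ValueError("file_size must be non-negative")
    full, rest = divmod(file_size, block_size)
    return [block_size] * full + ([rest] if rest else [])


def place_replicas(block_index, topology, replication=REPLICATION):
    """Pick (rack, node) pairs for one block so replicas span two racks.

    topology maps a rack name to the list of node names in that rack.
    The first replica goes to one rack, the remaining ones to the next rack.
    """
    racks = sorted(topology)
    if len(racks) < 2:
        raise ValueError("need at least two racks")
    first_rack = racks[block_index % len(racks)]
    remote_rack = racks[(block_index + 1) % len(racks)]
    remote_nodes = topology[remote_rack]
    if len(remote_nodes) < replication - 1:
        raise ValueError("remote rack has too few nodes")
    local_nodes = topology[first_rack]
    placements = [(first_rack, local_nodes[block_index % len(local_nodes)])]
    start = block_index % len(remote_nodes)
    for i in range(replication - 1):
        node = remote_nodes[(start + i) % len(remote_nodes)]
        placements.append((remote_rack, node))
    return placements


# Hypothetical two-rack cluster and a 300 MiB file.
topology = {"rack1": ["n1", "n2"], "rack2": ["n3", "n4"]}
blocks = split_into_blocks(300 * 1024 * 1024)
layout = [place_replicas(i, topology) for i in range(len(blocks))]
```

Because every block ends up with replicas on two racks, a job can keep reading a block from the surviving rack if a whole rack goes offline, and different blocks of the same file can be processed on different nodes at the same time.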

Technical Specifications

Devices Supported

- Web-based

- iOS

- Android

- Desktop

Customer Types

- Small business

- Medium business

- Enterprise

Support Types

- Phone

- Online

Hadoop HDFS Integrations

The following Hadoop HDFS integrations are currently offered by the vendor:

No information available.

Customer Support

Pricing Plans

Hadoop HDFS pricing is available in the following plans: